Paper at AAAI 2021: “Semantic MapNet: Building Allocentric Semantic Maps and Representations from Egocentric Views”

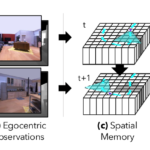

We study the task of semantic mapping: an embodied agent (a robot or an egocentric AI assistant) is given a tour of a new environment and asked to build an allocentric top-down semantic map (‘what is where?’) from the egocentric observations of an RGB-D camera with known pose (obtained via localization sensors). Importantly, our goal is to build neural episodic memories and spatio-semantic representations of 3D spaces that let the agent easily learn subsequent tasks in the same space, such as navigating to objects seen during the tour (‘Find chair’) or answering questions about the space (‘How many chairs did you see in the house?’).
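To make the egocentric-to-allocentric step concrete, here is a minimal sketch of the geometry only. It is not the Semantic MapNet model: it accumulates hard per-pixel label votes into a grid, whereas the paper aims at neural memories. The function name `project_to_topdown`, the pinhole intrinsics (`fx`, `fy`, `cx`, `cy`), the grid size, the cell resolution, and the convention that world X and Z span the floor plane are all assumptions made for illustration.

```python
import numpy as np

def project_to_topdown(depth, labels, cam_to_world, fx, fy, cx, cy,
                       map_size=64, cell_m=0.1, num_classes=4):
    """Accumulate per-pixel class labels into a top-down vote grid.

    depth:        (H, W) depth in metres, 0 where invalid
    labels:       (H, W) integer class id per pixel (hypothetical input)
    cam_to_world: (4, 4) known camera pose as a homogeneous transform
    """
    h, w = depth.shape
    v, u = np.mgrid[0:h, 0:w]
    valid = depth > 0

    # Back-project pixels to camera-frame 3D points (pinhole model assumed).
    x = (u - cx) * depth / fx
    y = (v - cy) * depth / fy
    pts_cam = np.stack([x, y, depth, np.ones_like(depth)], axis=-1)[valid]

    # Move points into the allocentric (world) frame using the known pose.
    pts_world = pts_cam @ cam_to_world.T

    # Assumption: world X and Z span the floor; the grid centre is the origin.
    col = np.floor(pts_world[:, 0] / cell_m).astype(int) + map_size // 2
    row = np.floor(pts_world[:, 2] / cell_m).astype(int) + map_size // 2
    inside = (row >= 0) & (row < map_size) & (col >= 0) & (col < map_size)

    # One vote per pixel for its class in the cell it lands in.
    votes = np.zeros((map_size, map_size, num_classes), dtype=np.int64)
    np.add.at(votes, (row[inside], col[inside], labels[valid][inside]), 1)
    return votes

# Toy frame: a 4x4 image of a flat surface 1 m ahead, all labelled class 2.
depth = np.full((4, 4), 1.0)
labels = np.full((4, 4), 2, dtype=np.int64)
pose = np.eye(4)  # camera at the world origin, looking along +Z
votes = project_to_topdown(depth, labels, pose, fx=4.0, fy=4.0, cx=2.0, cy=2.0)

# Per-cell majority class; cells with no votes default to class 0 here.
semantic_map = votes.argmax(axis=-1)
```

Calling the function once per frame of the tour and summing the returned grids would give a map of the whole space under these assumptions; a question such as ‘How many chairs did you see?’ would then need an extra instance-grouping step that this sketch does not cover.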